Setting realistic deadlines at Divvy

Bruce Weller

VP of Engineering, Divvy

In this interview, Bruce Weller, VP of Engineering at Divvy (acquired by Bill.com), describes the practices he coaches teams on to help them set clear and realistic deadlines.

Here’s Bruce.

Missed deadlines are often the result of simple miscommunications. I’ve seen it throughout my career: a team didn’t separate their “estimate” from the “deadline”, didn’t discuss the cost of failure, or didn’t agree on the definition of done. They “missed” the deadline because there wasn’t full clarity around what the deadline meant.

At Divvy we’ve written these practices down and coach managers to follow them. As a team, we’ve gotten a lot better at hitting deadlines.

1. Separate “estimates” from “deadlines”

There’s a difference between an estimate and a deadline:

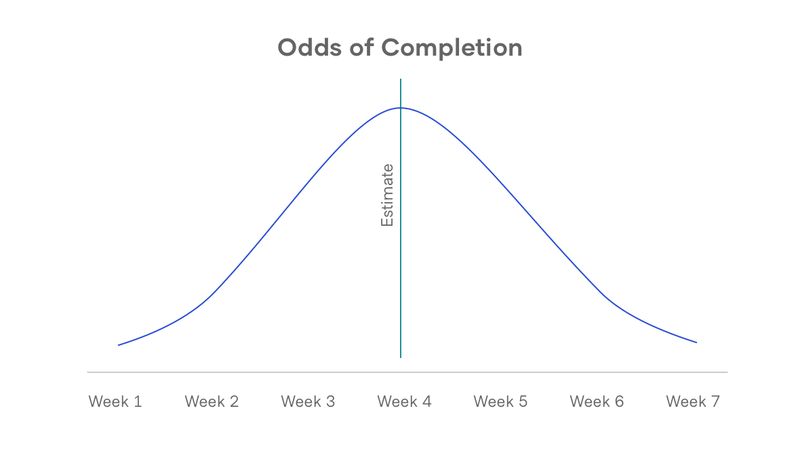

An estimate is your closest approximation of when something might get completed. If you picture a standard bell curve, where the top of the bell is your estimate, what you see is that most things get completed either a little before or a little after the estimate. The important thing to note is that “a little after” or “a little before” are both perfectly valid outcomes.

But oftentimes when people say “estimate” they’re actually talking about a “deadline”. And as soon as we’re talking about a deadline, we’re talking about a date where the work is ideally completed “ahead” of time rather than behind.

Picture the bell curve again. With an estimate, you’d expect 50% of your projects to be completed before the date and 50% after. With a deadline, you take the estimate and push the date later, to a confidence level you’re comfortable with (e.g., a 90%, 80%, or 70% chance that the work is completed before the deadline).
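To make the bell-curve picture concrete, here is a minimal Python sketch of turning an estimate into a deadline at a chosen confidence level. It assumes completion times follow a normal distribution centered on the estimate; the function name `deadline_from_estimate` and the `spread_days` parameter (the standard deviation) are hypothetical and not something described in the interview. Real project timelines are often skewed toward running late, so treat the numbers as illustrative only.

```python
from statistics import NormalDist


def deadline_from_estimate(estimate_days: float, spread_days: float, confidence: float) -> float:
    """Return the day count by which the work finishes with probability `confidence`.

    Assumption: completion time is normally distributed with mean
    `estimate_days` and standard deviation `spread_days`.
    """
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    if spread_days <= 0:
        raise ValueError("spread_days must be positive")
    # inv_cdf gives the point below which `confidence` of the distribution lies.
    return NormalDist(mu=estimate_days, sigma=spread_days).inv_cdf(confidence)


if __name__ == "__main__":
    # A 20-day estimate with a hypothetical 5-day spread.
    print(round(deadline_from_estimate(20, 5, 0.5), 1))  # the estimate itself: 20.0
    print(round(deadline_from_estimate(20, 5, 0.9), 1))  # about 26.4
```

At 50% confidence the deadline equals the estimate; raising the confidence level moves the date later, which is the "slide" described above.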

2. Discuss the cost of failure

Teams should decide what level of confidence they’re comfortable working with. Do you want 70% of your projects to be completed before the deadline? 80%? 90%? That’s a decision teams can agree on. They should also reevaluate that level of confidence for “big” projects.

We can come to a specific level of confidence by considering the cost of failure for a given project. When I explain the cost of failure to teams, I use an analogy: would you rather walk the length of a ten-foot plank that is four inches wide or one that is eight inches wide? Of course, we would all take the eight-inch plank if we are trying to succeed.

Now let’s place the four-inch plank two feet off the ground and the eight-inch plank twenty feet off the ground. Make your choice again. Same thing with the plank at one hundred feet. You get the idea.

Which height best represents your project? What is the cost of failure? Is this a “bet the company” project? If so, you’ll want more certainty.

3. Agree on what is “complete” at the deadline

Get on the same page about what will be complete at the deadline. Is the project through testing? Is it in production? Does it mean we’re A/B testing with customers?

I’ve seen developers believe they are aiming for “code complete”, when stakeholders were expecting deployed features available in production. Make sure everyone knows the definition of “complete.”

4. Communicate the stages of an estimate

One way teams can communicate more clearly is to use the terms “rough estimate” and “firm estimate.”

I want a low barrier for Product to ask Engineering for an estimate. I want them to come to us early. Maybe they ask, “Hey, what would it take to get this feature?” We want to partner and also to protect against misunderstandings. If they give me a rough spec (which could be just a sentence), we can give them a rough estimate. Dropping the word “rough” can get us into trouble, because the number might reach the marketing team or the executive team, but using the word “rough” gives us flexibility.

So Product comes back with more information: “We figured this out, here are some bullet points of what we’re going to need.” We look at it and say, “Oh, we thought you were going to need this and it’s not there, so we can actually reduce the rough estimate a bit.” Product goes off and comes back with a more refined spec, and now there’s an integration we weren’t anticipating. We can say, “Let’s change the rough estimate to reflect that.”

Refining specs and estimates this way is a funneling process. At a certain point, we’ll be in agreement on what the spec is and what the estimate is, and we’ll expect little change from then on. Now we switch to the terms “firm spec” and “firm estimate”. And from the firm estimate we can build a deadline.
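One way to keep the “rough” and “firm” labels from getting dropped is to make the stage part of the estimate itself. The sketch below is only an illustration of that idea; the `Stage` and `Estimate` types and the `can_set_deadline` helper are hypothetical names, not tooling described in the interview.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    ROUGH = "rough"
    FIRM = "firm"


@dataclass(frozen=True)
class Estimate:
    days: float
    stage: Stage

    def label(self) -> str:
        # Always print the stage so the word "rough" cannot be silently dropped.
        return f"{self.stage.value} estimate: {self.days:g} days"


def can_set_deadline(estimate: Estimate) -> bool:
    """A deadline is only built from a firm estimate."""
    return estimate.stage is Stage.FIRM


if __name__ == "__main__":
    first_pass = Estimate(days=15, stage=Stage.ROUGH)
    agreed = Estimate(days=22, stage=Stage.FIRM)
    print(first_pass.label(), can_set_deadline(first_pass))
    print(agreed.label(), can_set_deadline(agreed))
```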

The team knows the deadline is important to hit. We all expect to hit it. If we miss a deadline, we hold a post-mortem and learn from the experience.

If missing deadlines is a pattern, then we want to understand why. What’s the failure in the process? Is there a skill that’s missing from the squad so they end up waiting for someone else? Is there miscommunication around the deadline or are the requirements not fully clear?

If a team has a pattern of delivering and then has a miss, we don’t punish them — why punish someone right at the moment they’ve gotten smarter? But we do want to dig into the root cause and see what we can learn and adjust.

5. Allow pushback

We don’t hire software developers for their proficiency at public speaking. Some of them are naturally great at it, but not all of them. Product Managers, on the other hand, must be proficient with this skill. They use it to motivate people, get members of a group on the same page, communicate progress, and build support for their initiatives.

Due to this mismatch, an anti-pattern that sometimes emerges is a Product Review where Product speaks for Engineering. This can lead to a misunderstanding about estimates and deadlines and an incomplete commitment to a deadline. Our groups have worked together to keep this from happening.

One trick we’ve discovered is for the Product and Engineering leaders to do a dry run of the Product Review in advance of the actual meeting. This lets everyone see the deck and voice concerns if anything is off. It’s a low-stress environment where engineers feel more comfortable pushing back.

6. Track say:do ratios

As part of our Product Reviews, teams share their say:do ratio. They feel a sense of pride in it, and get to brag, “Here’s our say:do ratio; we hit our last four deadlines.” Time ranges can be flexible. The point is to give teams a reason to track and a forum to brag about making and meeting commitments. (Teams get to brag about quality too, but that might be another post.)
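The interview does not spell out a formula for the say:do ratio. A common reading, assumed here, is the fraction of commitments that have come due and were delivered on or before their deadline. The following Python sketch uses hypothetical names (`Commitment`, `say_do_ratio`) and made-up dates purely for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Sequence


@dataclass(frozen=True)
class Commitment:
    name: str
    deadline: date
    delivered_on: Optional[date] = None  # None means not delivered yet


def say_do_ratio(commitments: Sequence[Commitment], as_of: date) -> Optional[float]:
    """Fraction of due commitments delivered on or before their deadline.

    Returns None when nothing has come due yet, so an empty history
    is not reported as a perfect (or zero) score.
    """
    due = [c for c in commitments if c.deadline <= as_of]
    if not due:
        return None
    met = sum(
        1 for c in due
        if c.delivered_on is not None and c.delivered_on <= c.deadline
    )
    return met / len(due)


if __name__ == "__main__":
    history = [
        Commitment("feature A", date(2023, 1, 31), date(2023, 1, 27)),
        Commitment("feature B", date(2023, 2, 28), date(2023, 3, 3)),   # late
        Commitment("feature C", date(2023, 3, 31), date(2023, 3, 31)),
        Commitment("feature D", date(2023, 4, 30), date(2023, 4, 20)),
    ]
    print(say_do_ratio(history, as_of=date(2023, 5, 1)))  # 3 of 4 met: 0.75
```

Passing a different `as_of` date, or filtering the list first, is one way to keep the time range flexible.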

Parting words

I’m big on autonomy, which means teams get to make their own decisions and they also get to fix things when they go off the rails. Estimates and deadlines are discussed openly and constantly, and they stay in focus. That being the case, our squads and squad leaders naturally get better at them with each trip through the process.