The case against cycle time

Many organizations use cycle time as a measure of engineering performance, but I recommend against it.

One problem with using cycle time as a performance measure is that it is only useful at the extremes. Take, for example, a cloud service team with an average cycle time of 3 months: reducing this team’s cycle time is probably a good idea. Now take a more typical team with an average cycle time of 4 days. Would further optimizing its cycle time provide any benefit?

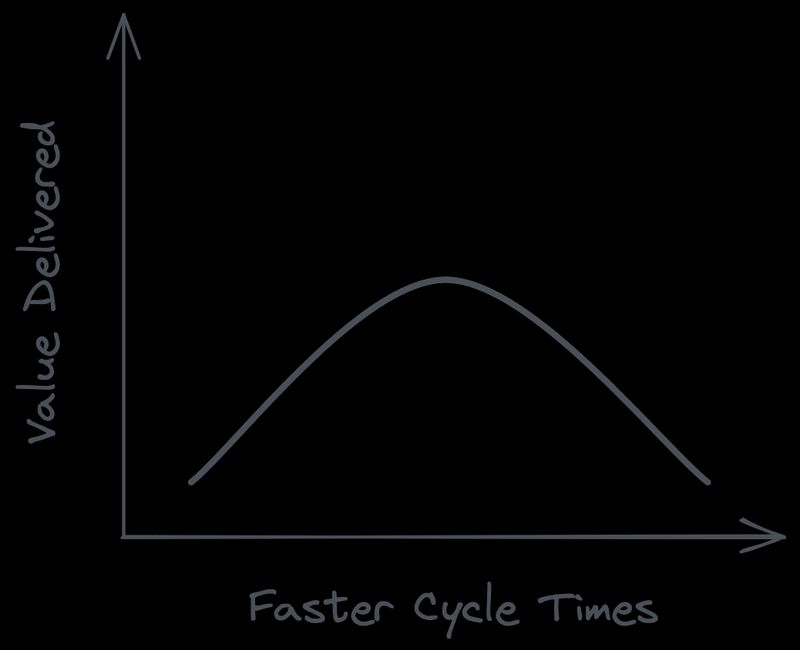

Although perpetually reducing cycle times may feel like improvement, if all you are doing is rearranging work into smaller pieces, then you are not increasing net value delivered to customers. To put it another way: delivering 2 units of software twice per week isn’t necessarily better than delivering 4 units of software once per week, especially if breaking down work unnecessarily causes more toil for developers. We can visualize this effect using an inverted J curve:
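The comparison between the two delivery patterns is simple arithmetic. Treating software as countable "units" is the same simplification the example itself makes; in practice, work per delivery is hard to quantify.

$$
\begin{aligned}
\text{throughput} &= \text{units per delivery} \times \text{deliveries per week} \\
2 \times 2 &= 4 \text{ units/week} \\
4 \times 1 &= 4 \text{ units/week}
\end{aligned}
$$

Halving the batch size while doubling the delivery frequency leaves throughput unchanged, so any extra toil from splitting the work reduces net value rather than adding to it.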

Another problem with using cycle time as a performance measure is that it doesn’t capture speed in the way leaders often intend. To use an analogy: in cycling, your speed is the product of cadence (how fast you pedal) and the distance each pedal stroke carries you (set by your gearing). In software development, cycle time tells us the delivery cadence, but not how much work gets delivered in each cycle, which is famously difficult to measure.

When leaders push teams to accelerate cycle times, teams may end up pedaling faster without actually delivering more. And when leaders compare cycle times across teams without factoring in batch size, they may draw false conclusions about teams’ performance.
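A minimal sketch of how a cycle-time comparison can mislead, assuming (hypothetically) that the work in each delivery could be expressed as a single number of "units", which is exactly the quantity that is hard to measure in real teams. The team names and figures are invented for illustration, and the sketch also assumes each team has one batch in progress at a time, so its delivery cadence is simply the inverse of its cycle time.

```python
from dataclasses import dataclass


@dataclass
class Team:
    """Hypothetical team profile; all figures are illustrative, not measured."""
    name: str
    cycle_time_days: float  # average calendar days from start to delivery of one batch
    units_per_batch: float  # assumed (hard-to-measure) amount of work per delivery

    @property
    def throughput_per_week(self) -> float:
        # Cadence (deliveries per week) multiplied by work delivered per cycle.
        # Assumes one batch in progress at a time, so cadence = 7 / cycle time.
        deliveries_per_week = 7 / self.cycle_time_days
        return deliveries_per_week * self.units_per_batch


teams = [
    Team("Team A", cycle_time_days=1.0, units_per_batch=1.0),
    Team("Team B", cycle_time_days=4.0, units_per_batch=6.0),
]

# Ranking by cycle time alone versus ranking by work actually delivered.
by_cycle_time = sorted(teams, key=lambda t: t.cycle_time_days)
by_throughput = sorted(teams, key=lambda t: t.throughput_per_week, reverse=True)

print("Fastest cycle time:", by_cycle_time[0].name)
print("Highest throughput:", by_throughput[0].name)
for t in teams:
    print(f"{t.name}: {t.cycle_time_days} days/cycle, {t.throughput_per_week:.1f} units/week")
```

Under these made-up numbers, Team A "wins" on cycle time (1 day versus 4), yet Team B delivers more work per week (10.5 units versus 7.0) because each of its deliveries is larger.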

To sum up, there are cases in which individual teams may find cycle time useful. However, using cycle time as a top-level performance measure that is pushed onto all teams is counterproductive. To actually improve performance, leaders should focus on measuring the friction developers experience and removing the bottlenecks that slow them down.