How DevProd teams can use surveys to inform their roadmaps

Brook Perry

Head of Marketing

A common pitfall for DevEx teams is to make assumptions about what developers need. This can show up in a couple of ways. The team goes heads-down on a project for weeks or more, releases what it has built, and no one uses it. Or others in engineering, whether leadership or the engineering teams themselves, don’t understand what the DevEx team does. These are signs that the team hasn’t created a consistent feedback loop with engineering and, as a result, is either building the wrong solutions or solving the wrong problems.

Two-way communication can determine whether a DevEx team grows or shrinks; it’s the foundation of a successful developer experience program. Two-way communication means listening to developers and communicating back what the team is working on. This post shares some of what we’ve learned about setting up channels for giving and receiving information.

Start with surveys

Leaders most frequently learn about pain points through one-on-ones, but one-on-ones make it hard to tell how widespread a problem actually is. A developer experience survey (sometimes known as a “developer productivity survey”) systematizes the process of gathering data about which tools and processes are and aren’t serving developers’ needs.

The results will help DevEx teams feel confident they’re focused on the highest priority problems.

Topics to gather data on

Instead of using open-ended questions, ask targeted questions. This provides more accurate and precise responses.

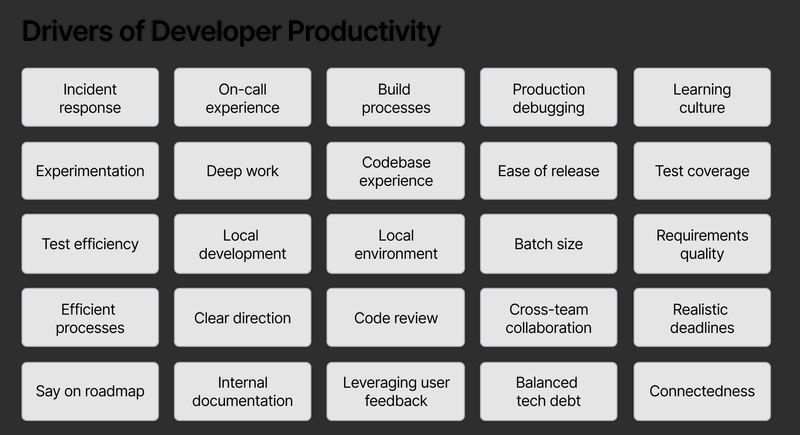

As for choosing what to measure, there is a substantial body of research that examines factors that drive developer productivity. This research can be helpful in providing initial ideas of topics to focus on. Researchers from DX have published several research papers specific to developer experience: one identifies 25 top factors affecting developer experience, and another provides a practical framework for identifying what to measure. DevEx teams can use these resources for inspiration, and select topics that they can take action on.

Types of data to gather

A basic developer experience survey captures how satisfied developers are with the topics above (again, the survey should include only the topics the team is interested in or can act on, not the full list). A more advanced developer experience survey also gathers objective data about each of those topics.

One misconception about surveys is that they can only capture subjective data. Here’s a quick explanation of the difference between subjective and objective data, and why a survey should capture both:

The difference between subjective and objective data: A subjective measure is a measurement of someone’s opinions or attitudes about something. Think: satisfaction with a topic or ease of use of a tool. An objective measure is a measurement of facts or events, for example, how long something takes or how frequently something occurs.

Why capture subjective and objective data: Together, subjective and objective measures can give a more holistic picture of the developer experience, showing both what is actually happening and whether it is good or bad. Objective data would show how long builds take, or how long the code review process takes. Subjective data would show how satisfied developers are with build times or the code review process.

Some scenarios that help explain why capturing both lenses is important:

- We can see (with objective data) that two different teams both have a pull request cycle time of 24 hours. When we talk to those two teams, we hear different stories. One team is frustrated with the process because they spend too much time waiting. For the other team, it’s fine: they work on tasks in parallel, so the 24-hour cycle time works for them.

- Taking the same example, we often hear of leaders asking teams to improve a metric like cycle time. Objective data shows whether the number has changed. Subjective data tells us whether that change was positive or negative for the team.

Objective data tells us what is happening. Subjective data tells us whether it’s a bottleneck for a team.
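To make the two scenarios above concrete, here is a minimal Python sketch that pairs an objective measure (pull request cycle time) with a subjective one (mean satisfaction score) for each team. The `TeamSignal` type, the 1-5 satisfaction scale, the 3.0 cutoff and the sample numbers are all hypothetical and exist only for illustration. A real program would use its own survey scale and thresholds.

```python
from dataclasses import dataclass


@dataclass
class TeamSignal:
    """One team's data for a single topic (hypothetical structure for illustration)."""
    team: str
    cycle_time_hours: float  # objective: what is happening
    satisfaction: float      # subjective: mean survey score, assumed 1-5 scale


def classify(signal: TeamSignal, threshold: float = 3.0) -> str:
    """Flag a topic as a bottleneck only when developers report dissatisfaction.

    The 3.0 cutoff is an assumed value, not a standard.
    """
    return "bottleneck" if signal.satisfaction < threshold else "acceptable"


def describe_change(before: TeamSignal, after: TeamSignal) -> str:
    """Objective data shows whether the metric moved; subjective data shows whether it helped."""
    if after.cycle_time_hours == before.cycle_time_hours:
        return "metric unchanged"
    if after.satisfaction > before.satisfaction:
        return "metric changed, team reports improvement"
    if after.satisfaction < before.satisfaction:
        return "metric changed, team reports it got worse"
    return "metric changed, satisfaction flat"


# Scenario 1: identical objective data, different subjective experience.
teams = [
    TeamSignal("team-a", cycle_time_hours=24.0, satisfaction=2.1),
    TeamSignal("team-b", cycle_time_hours=24.0, satisfaction=4.3),
]
for t in teams:
    print(f"{t.team}: {t.cycle_time_hours:.0f}h cycle time -> {classify(t)}")

# Scenario 2: a leader asks team-a to cut cycle time; did the change help?
print(describe_change(teams[0], TeamSignal("team-a", cycle_time_hours=12.0, satisfaction=3.8)))
```

Both teams report the same 24-hour cycle time, but only team-a is flagged as a bottleneck. The objective number alone cannot make that distinction.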

Do’s and don’ts with developer experience surveys

There are a few important considerations when running surveys:

Do:

1. Communicate with the engineering teams before the survey. Share why the survey is being sent and how the results will be used. This is especially important if the organization has received other surveys before (for example, from HR).

Letting engineering know who the survey is coming from and how it’ll be used will boost engagement.

2. Keep it short. Ideally the survey takes less than 5 minutes to complete. We’ve heard of surveys taking 20 minutes or longer (for example, DORA’s survey took around 20 to 30 minutes to complete), but these are unlikely to sustain high participation rates over time.

3. Adopt a cadence based on how quickly action can be taken. Developer experience surveys can be run every six to twelve weeks, depending on how quickly teams will take action on the data.

4. Pressure-test questions if you’re writing your own. Roll the survey out to a small group first to check for clarity. Survey measurement is a science, and it’s likely you’ll need to refine your questions.

5. Share results as quickly as possible. Once survey results are available, share that data back with the team along with observations. A fast feedback loop here will help developers trust that their feedback is being heard (which will help participation rates on future surveys).

Don’t:

1. Don’t outsource the process to HR. Partnering with HR to run a developer experience survey frees the DevEx team from having to design or administer the survey, but the downsides outweigh the benefits. Giving up control over the survey can delay the results, and it can lead to lower participation rates.

2. Don’t blindly adopt full anonymity. This is a conversation teams need to have: should we keep all responses anonymous, be fully transparent, or use partial anonymity? HR surveys are anonymous because they ask for information that may be private. Developer experience surveys, however, ask developers about the tools and processes they interact with, so there’s a case to be made that teams should feel comfortable sharing this information. (Of course, always make it clear to participants whether their responses will be anonymous.)

Follow up with interviews

Interviews can be useful in two situations: 1) the DevEx team wants to better understand a problem before deciding whether to focus on it, or 2) the DevEx team has already decided to focus on the problem and wants to understand what developers do today when they encounter it, including any workarounds they use.

If the developer experience survey was only partially anonymous, or not anonymous at all, we know which developers are dissatisfied with different tools and processes. These developers may be more willing to do an interview, so DevEx teams can reach out to that group to schedule one. (This group may also be a good source of volunteers to be early users of your solution.)

Here’s an outreach message you can copy to ask for interviews, and a few tips on running the interview:

Example outreach message to ask for an interview:

SUBJ: Getting your take on [topic]

Hi [Name],

We really appreciate you taking the time to share feedback on our [developer experience survey]. One thing that came to light in the survey was how [widespread; impactful] a problem [topic] is.

Would you be open to sharing more about what you’re noticing that’s an issue and when you experience it? It’d be great to do an informal interview to better understand the problem. That way, we can make sure we’re solving it in the right way.

If you are open to it, feel free to send some timeframes when you’re available, or use my calendar link to schedule a time.

Tips on running the interview from Michael Galloway (Platform engineering leader at HashiCorp, previously at Doma and Netflix):

- Have people building the solution on the call

- The conversation should be between 1 interviewer and 1 interviewee. Observers (other members of the DevEx team) can join but should stay on mute with their video off

- There should be a dedicated Slack channel for observers to communicate with the interviewer

- Recommended format: 1 hour. 5 minutes for warming up, 50 minutes for discussion, 5 minutes for wrapping up.

- After the interview: transcribe it and store the recording in a single folder where all interview recordings are kept.

Other listening channels DevEx teams use

Here are some other ways DevEx teams gather feedback from their customers:

- Ask/feedback channels. A forum (e.g., Slack channel) where developers can go to submit feedback or questions about internal tools and processes.

- Office hours. A recurring time slot for developers with questions to get hands-on support.

- Customer advisory boards. Host a facilitated meeting to get candid feedback from engineers within a persona (e.g., working on a specific team, using specific tools, experiencing a specific problem).

- Embedding or shadowing. Having DevEx team members sit or work alongside developers to better understand what they’re experiencing.

Share wins and progress

After each survey, share the results and your commentary with the organization. This helps drive future participation as well as adoption of the solutions the team creates. Here’s an email template with prompts that you can copy and use.
