AI-generated merged code holds steady at ~30%

A preview from our upcoming Q1 AI Impact Report.

Justin Reock

Deputy CTO

This post was originally published in Engineering Enablement, DX’s newsletter dedicated to sharing research and perspectives on developer productivity. Subscribe to be notified when we publish new issues.

Each quarter, DX publishes data on how AI is being used at 500+ organizations and the impact it’s having. One metric we’ve been following is the percentage of code that’s written by AI—today’s newsletter shares a preview of what we’re seeing.

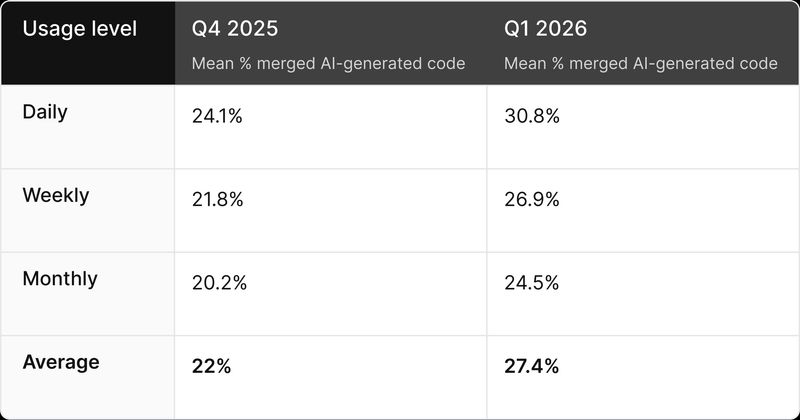

Currently, we measure the “percentage of AI-generated code” by asking developers directly how much of their merged code they believe is written by AI. This measure is captured quarterly by DX. In Q1 2026, developers at more than 500 organizations reported their average percentage of AI-authored code, along with how frequently they use AI tools (daily, weekly, or monthly). We aggregated these self-reported percentages to calculate the mean share of merged AI-generated code for each usage segment and overall.
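As a rough illustration of that aggregation step, here is a minimal Python sketch. The response values, the `responses` list, and the `mean_share_by_segment` helper are all hypothetical, and the overall figure is assumed to be a simple unweighted mean across respondents; the actual DX pipeline may weight or clean responses differently.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey responses: (usage frequency, self-reported AI share in %).
# These numbers are made up for illustration and are not DX data.
responses = [
    ("daily", 35.0),
    ("daily", 25.0),
    ("weekly", 20.0),
    ("weekly", 10.0),
    ("monthly", 5.0),
]


def mean_share_by_segment(rows):
    """Return the mean self-reported AI share per usage segment, plus overall."""
    by_segment = defaultdict(list)
    for segment, share in rows:
        by_segment[segment].append(share)

    result = {segment: mean(shares) for segment, shares in by_segment.items()}
    # Assumption: "overall" is an unweighted mean over every respondent.
    result["overall"] = mean([share for _, share in rows])
    return result


summary = mean_share_by_segment(responses)
print(summary)
```

Because the overall mean is taken across respondents, it is pulled toward whichever segment has the most respondents rather than sitting halfway between the segment means.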

We ran the previous Q4 analysis the same way. In the future, we will keep collecting this data and compare it against a new system-based measure that automatically tracks the percentage of AI-generated code.

For this quarter, here’s a preview of what we’re seeing. Stay tuned for the full report later this month.

AI-generated code reaching production sees a slight increase

Since last quarter, the average share of merged code authored by AI has moved from 22% to 27.4%. That’s directionally up, but it’s a smaller change than we had expected.

The stability around 30% could be read two ways. On one hand, it may simply mean that current models and tooling are producing roughly the same proportion of merge-ready code as they were last quarter. However, there’s reason to believe something else is going on. The second half of 2025—particularly November and December—is widely regarded as a turning point for AI-assisted development. Models like Opus 4.5 represented a significant leap in capability, and some have gone so far as to say that anything before November 2025 shouldn’t even be used as a baseline, given how much changed in a short window.

If that’s true, then the more likely explanation for the stability around 30% is that most teams haven’t yet fully adapted to take advantage of those improvements. New models don’t automatically translate to more merged AI code; developers still need to update their workflows, build trust in the output, and find the right use cases for more capable tools.

There’s some evidence to support this interpretation. The daily users segment saw the largest increase in AI-generated code, moving from 24.1% to 30.8%. Meanwhile, weekly and monthly users, who are less likely to have adjusted their habits, saw smaller increases.
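To put the quoted figures side by side, the short sketch below expresses each move as both a percentage-point change and a relative change. The `change` helper is hypothetical; the inputs are the overall and daily-user numbers cited in this post (weekly and monthly figures are not given here, so they are omitted).

```python
def change(before, after):
    """Return (percentage-point change, relative change in %), rounded to 1 decimal."""
    points = after - before
    relative = points / before * 100
    return round(points, 1), round(relative, 1)


# Figures quoted in this post: share of merged code authored by AI, Q4 vs. Q1.
overall = change(22.0, 27.4)
daily = change(24.1, 30.8)

print("overall:", overall)  # (5.4, 24.5)
print("daily:", daily)      # (6.7, 27.8)
```

On both measures, the daily-user segment moved more than the overall average.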

Final thoughts

This analysis focuses on how much merged code is AI-generated, but it doesn’t answer questions like whether a higher percentage of AI-generated code is associated with changes in quality. It also raises the question of whether certain types of organizations, or groups of developers, are merging more or less AI-generated code than others.

We’ll explore these questions, and others like them, in the full Q1 AI Impact Report.

Additionally, in future reports we’ll compare this self-reported measure against a telemetry-based metric that tracks AI-generated code directly from version control systems. This will not only provide another lens on AI’s impact, but also give us a more granular view of how patterns are changing over time and where those changes are concentrated.