How DX accelerates engineering leader onboarding

Greyson Junggren

Co-founder, CRO

Stepping into a new engineering leader role—whether as a VP of Engineering, Head of Engineering, or a similar title—is one of the most demanding transitions in a technical career. You’re now accountable for the health and output of your engineering organization, responsible for building high-performing teams, and expected to ship quality software while keeping delivery predictable and morale strong.

In 2026, that responsibility also means owning AI adoption within your engineering org: determining which tools belong in your developers’ hands, ensuring those investments translate into real productivity gains, and making the case to leadership that the resources you’re spending are paying off.

The expectations land fast. Your CTO, CIO, or VP will want to understand where your teams stand, where the bottlenecks are, and how you plan to address them—often before you’ve had a chance to dig into the codebase, sit down with every team lead, or untangle the history behind your most pressing delivery problems.

Traditionally, getting that picture has meant a long ramp: weeks of one-on-ones, manually pulling metrics from a half-dozen tools, and relying heavily on institutional knowledge held by a handful of key people. Most new engineering leaders don’t have that kind of runway before they’re expected to have answers.

With DX, you don’t have to choose between moving fast and understanding what’s actually going on. You can get comprehensive, data-driven clarity from day one—so you can spend less time orienting and more time leading. Here’s how DX helps new engineering leaders hit the ground running, earn trust quickly, and drive real results from the start.

Get the lay of the land quickly

The first thing any new engineering leader needs is an honest read on the organization: where teams are performing well, where delivery is stalling, what’s causing day-to-day friction, and how developers actually feel about the way they work.

In 2026, that assessment also needs to cover AI tooling—which teams are using it, whether it’s genuinely helping, and where it might be creating more noise than signal. Building that understanding the old-fashioned way takes months of interviews, ad hoc surveys, and fragmented data.

With DX, you can establish a grounded, data-driven baseline from the moment you start. Here’s how:

- Unfiltered signal from your developers: DX’s developer surveys achieve a 96% participation rate on average—meaning the data reflects the full team, not just the loudest voices in the room. You’ll get candid, representative feedback on where developers feel productive, where they’re running into walls, and how they’re experiencing AI tools in practice. That’s the kind of context that usually takes months to surface through one-on-ones alone.

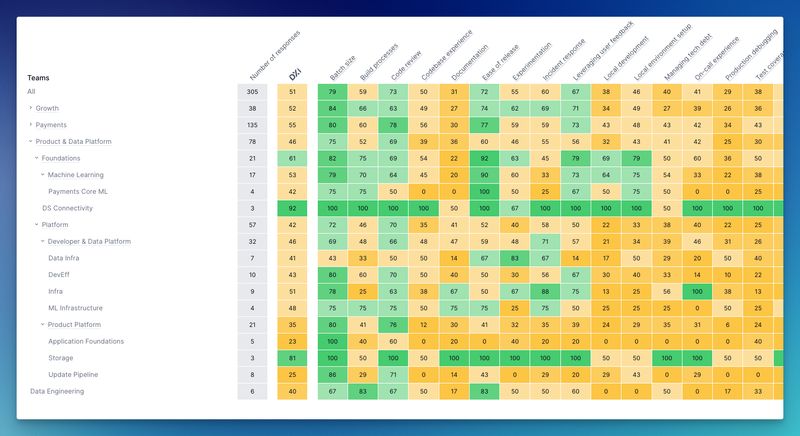

- Team-level visibility into what’s working and what isn’t: DX surfaces specific feedback and metrics at the team level, so you can quickly see which squads are hitting their stride, which are struggling with delivery or tooling friction, and where your attention is most needed. Rather than inheriting a vague sense that “some teams are doing better than others,” you’ll have a clear, structured view within days.

- Benchmarks that put your org in context: DX’s granular benchmarking lets you compare your teams’ performance against peer engineering organizations, so you can quickly distinguish genuine problem areas from industry-wide headwinds and identify where you have real competitive strengths worth protecting.

Instead of spending your first quarter piecing together a picture, you can arrive at your first leadership reviews with a well-informed point of view on team health, delivery patterns, and where the biggest opportunities lie.

Report on the state of your engineering org—up and across

As a new engineering leader, you’ll face reporting demands from two directions at once: your CTO or CIO wants a clear-eyed view of engineering performance and a credible improvement plan, while your peers across product, design, and the business need to understand how engineering capacity is being spent and what they can count on.

Answering both audiences well requires more than gut feel. You need reliable metrics, a coherent story, and the ability to tie engineering activity to outcomes that matter to the business. In 2026, that picture also has to address AI: leadership will want to know whether the tools you’ve invested in are delivering, and your developers will want to know you understand what they’re actually experiencing day to day.

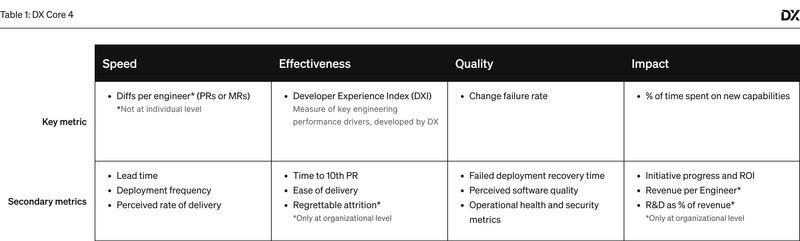

DX’s answer: the Core 4 framework

The DX Core 4 framework measures engineering productivity across speed, quality, effectiveness, and business impact—providing a balanced, defensible view of performance that maps to established models like DORA, SPACE, and DevEx. It gives you a consistent measurement foundation to track improvement over time, and makes it significantly easier to report upward in terms leadership understands and trusts.

With Core 4, you can walk into your first review with your CTO or CIO with solid data on where your org stands, how it compares to industry benchmarks, and a structured plan for what you’re going to improve and why. When the hard questions come—where is capacity going, why is cycle time up, what’s AI actually doing for us—you’ll have real answers.

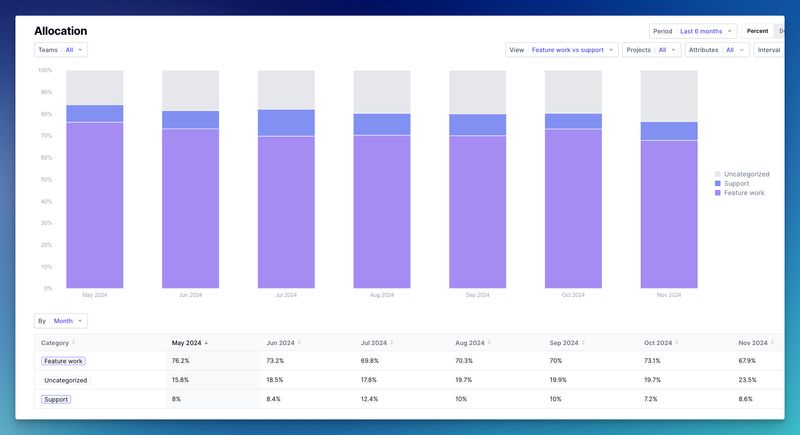

DX also helps you address one of the most common asks from leadership: “Where is engineering time actually going?” Quarterly allocation snapshots give you a visual, shareable breakdown of how capacity is distributed—including time absorbed or freed by AI tooling, the split between new work and technical debt, and whether your team’s allocation matches your stated priorities.
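To make the arithmetic behind an allocation breakdown concrete, here is a minimal sketch in Python. It is not DX's implementation or export format: the record shape, the category names, and the `allocation_shares` helper are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical work records: (team, category, engineer_days).
# The shape and category names are illustrative assumptions, not a DX format.
WORK_LOG = [
    ("payments", "new_work", 42.0),
    ("payments", "tech_debt", 18.0),
    ("platform", "new_work", 20.0),
    ("platform", "tech_debt", 25.0),
    ("platform", "support", 15.0),
]


def allocation_shares(records):
    """Return each category's share of total engineer-days as a fraction of 1."""
    totals = defaultdict(float)
    for _team, category, days in records:
        totals[category] += days
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {category: days / grand_total for category, days in totals.items()}


shares = allocation_shares(WORK_LOG)
for category, share in sorted(shares.items()):
    print(f"{category}: {share:.1%}")
```

Comparing these shares against your stated priorities is the useful part: if technical debt is meant to take a fifth of capacity and the data shows more than a third, that gap is worth raising with leadership.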

Measuring AI’s impact

AI coding assistants and productivity tools are now a standard line item in engineering budgets—and leadership at every level expects to know whether they’re working. DX gives you the measurement framework to answer that question with precision:

- Adoption metrics: Understand exactly which teams are actively using AI tools, at what frequency, and where organizational or workflow barriers are limiting uptake

- Productivity impact: Measure whether AI is genuinely accelerating delivery and reducing toil, or whether the overhead of adopting new tools is eating into the gains

- Developer sentiment: Find out how your engineers actually feel about the AI tools they’re using—whether they’re a welcome productivity boost or a source of frustration and context-switching

- Quality implications: Track whether AI-assisted development is maintaining code quality and security standards, or quietly introducing technical debt that will cost you later

Armed with this data, you can make confident recommendations to your CTO or CIO on where to double down, what to adjust, and where the org isn’t yet ready to get full value from AI investment.
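As a rough illustration of the adoption metric described above, the sketch below computes a per-team adoption rate and flags teams below a cutoff. The `TeamUsage` fields, the sample numbers, and the 50% threshold are hypothetical choices for this example and do not reflect how DX defines or calculates adoption.

```python
from dataclasses import dataclass


@dataclass
class TeamUsage:
    # Hypothetical fields; a real data source may expose different ones.
    team: str
    developers: int
    weekly_active_ai_users: int


def adoption_rate(usage: TeamUsage) -> float:
    """Share of a team's developers who used an AI tool in the past week."""
    if usage.developers == 0:
        return 0.0
    return usage.weekly_active_ai_users / usage.developers


def low_adoption_teams(usages, threshold=0.5):
    """Names of teams whose adoption rate is strictly below the threshold."""
    return [u.team for u in usages if adoption_rate(u) < threshold]


# Sample data, invented for illustration only.
USAGE = [
    TeamUsage("payments", developers=12, weekly_active_ai_users=9),
    TeamUsage("platform", developers=10, weekly_active_ai_users=3),
    TeamUsage("mobile", developers=8, weekly_active_ai_users=4),
]

print(low_adoption_teams(USAGE))
```

A low rate on its own does not say why uptake is limited, so it works best as a prompt for a conversation with that team rather than as a verdict.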

Identify struggling teams or individuals

One of the highest-leverage moves a new engineering leader can make is rapidly spotting where teams need support—before small problems compound into missed deadlines, attrition, or delivery failures. Teams can struggle for all kinds of reasons: unclear ownership, under-resourced projects, unresolved technical debt, or difficulty adapting to new AI-assisted workflows. Without good visibility, you’re relying on whoever speaks up first.

DX gives you an organization-wide view of where teams are thriving and where they’re under strain—cutting across delivery performance, code quality, and AI adoption friction:

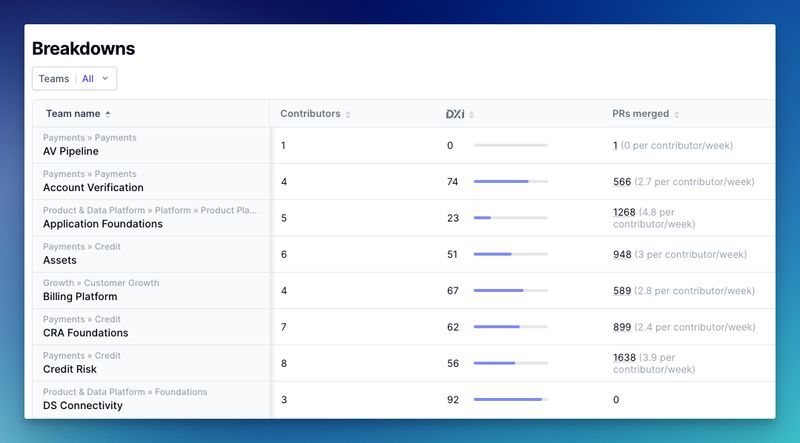

Detailed breakdowns give you ready-to-use reports on key metrics like DORA, cycle time, and throughput, organized by team and individual contributor.

This lets you move from observation to action quickly—identifying where a coaching conversation, a process change, or additional resourcing would have the most impact. You can pinpoint where AI tools are creating unexpected friction and start diagnosing whether the issue is technical, cultural, or tied to how adoption was rolled out.

With DX, you can invest your time and energy where it will matter most—building a resilient, high-performing engineering organization rather than constantly fighting fires.

By the end of your first 30 days, you’ll have a clear view of which teams need immediate intervention, which are strong enough to model best practices for others, and where focused investment will generate the most return.

Your first 90 days as an engineering leader will set the tone for your tenure and establish how much trust you earn from the people above and below you. Spending that time in passive discovery mode—waiting until you feel fully oriented before acting—means being reactive when your teams and your leadership need you to be decisive.

DX changes what those early days look like. You come in with real data, not just a listening tour. You can report upward with confidence in week one, identify your highest-priority interventions within the first month, and begin building the kind of engineering culture and delivery capability that earns lasting credibility.

The difference between a strong start and a slow one usually comes down to information. The right data in week two is far more valuable than a complete picture in month four. Leading effectively from day one, when your teams are watching closely and leadership is forming its first impressions, requires having the right insights at the right moment.

To learn more about how DX can accelerate your impact as a new engineering leader, request a demo today.