New Snapshot questions for measuring AI-driven engineering

The way we develop software is radically changing—and with it, what developer experience means is changing too.

Over the past few months, we’ve added new questions to DX Snapshots covering AI-authored code and AI time savings. These have helped hundreds of organizations begin to understand how AI is showing up in their engineering workflows. But AI isn’t standing still, and neither is the SDLC it’s reshaping. Leaders are moving past “are developers using AI tools?” and “is AI saving time?” toward harder questions: “are these tools actually reliable?” and “how much work are agents doing on their own?”

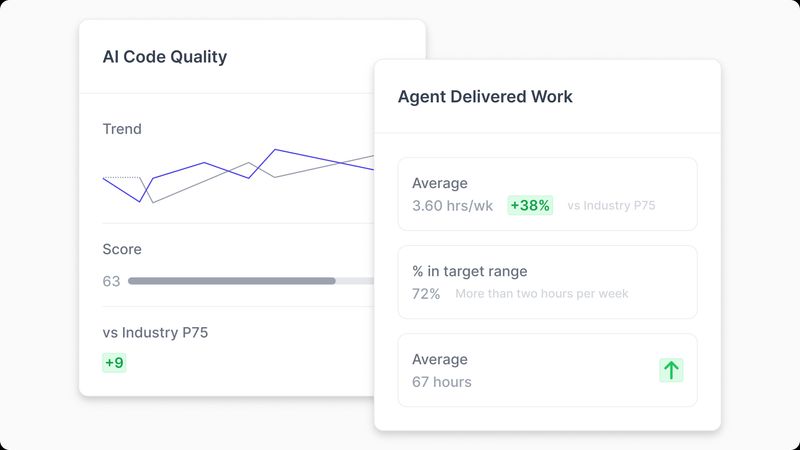

Today, we’re introducing two new Snapshot questions designed to help organizations measure the effectiveness of AI-driven engineering: AI code quality and Agent-delivered work.

AI code quality

AI code quality helps organizations understand how reliably and effectively their AI tools generate code.

Not all AI-generated code is created equal. Some tools and configurations produce clean, functional output that developers can accept with minimal modification. Others generate code that looks plausible but requires significant rework—or worse, introduces subtle bugs that only surface later. Understanding this variance is the difference between accelerating delivery and accumulating hidden technical debt.

This question captures developer sentiment on the quality of AI-generated code, providing a signal that system-level metrics alone can’t surface. A high volume of AI-generated code means very little if developers are spending their time fixing what the AI produced.

How to use this data: Organizations should use AI code quality scores to inform investments in AI readiness—including improved context management and harnesses that enable AI tools to be more effective. Low scores are a signal to dig deeper: it might be a tooling issue, a context issue, or both. Either way, it’s actionable.

Agent-delivered work

Agent-delivered work helps organizations measure how much work autonomous agents are contributing to their software delivery.

This is distinct from AI time savings, which measures amplification: how much faster developers work with AI assistance. Agent-delivered work measures augmentation: how much additional engineering capacity agents provide on their own.

The distinction matters. A developer who uses Copilot to write code faster is being amplified. An agent that independently completes a task, from ticket to PR, is augmenting the team’s capacity. As organizations move toward agentic workflows, understanding this shift is critical for planning, staffing, and investment decisions.

How to use this data: Organizations can use Agent-delivered work to measure progress toward agentic software delivery and find ways to more fully leverage agents. Teams with low augmentation scores may have untapped potential in how they assign and configure agent workflows. Teams with high scores are building a playbook that others in the organization can learn from.

Availability

Both new Snapshot questions are available starting today for all DX customers. Industry benchmarks for AI code quality and Agent-delivered work will become available in early Q3.

If you’re an existing DX customer, these questions will appear in your next Snapshot configuration. To learn more about how to incorporate them into your AI measurement strategy, reach out to your account representative or request a demo.