Introducing Fabric: The foundation for AI-native engineering

Ana Wishnoff

Product manager

Engineering organizations have invested heavily in AI coding tools over the past two years. Our recent research across 400+ companies shows the results: AI adoption is up 65%, but engineering velocity has increased by only about 8% at the median. The gap between investment and impact is widening, and the reason isn’t the tools themselves.

Our data points to a foundational problem. AI amplifies existing engineering practices, both good and bad. Teams with clear ownership, strong documentation, and reliable CI/CD are more likely to see real productivity gains. Teams without those foundations find that AI can exacerbate problems: generating code that doesn’t fit the environment, missing dependencies, and creating rework and tech debt. AI is only as effective as the context it has, and for many organizations, that context is fragmented, outdated, or missing.

We built Fabric to close this gap.

Fabric gives engineering leaders a single, live view of every service’s engineering standards (ownership, documentation, test coverage, CI/CD, security controls) and connects that information directly to their AI tools. When a service falls short of a standard, teams can point an AI agent at the gap, and it will automatically cut a PR to fix it.

Fabric makes it easy to:

1. Map your software ecosystem and feed context to AI tools. The catalog gives you a centralized, structured view of your software entities (services, repositories, libraries) along with their dependencies, ownership, and associated tooling. This creates a rich context layer for AI agents, so they operate with full architectural awareness rather than guessing.

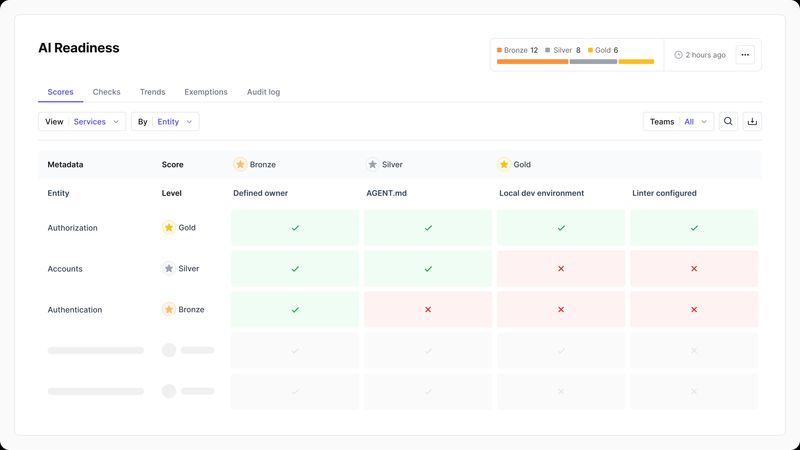

2. Find development bottlenecks and areas for improvement. Scorecards let you define the engineering standards that matter to your organization (reliability, security, documentation, CI/CD hygiene) and measure how every service performs against them.

For example, you could use pre-built AI Readiness Scorecards to confirm you have the security controls, automated tests, and deployment processes needed to scale AI safely. That would let you see, at a glance, which services meet the bar for autonomous AI workflows and which still require human review and intervention.

3. Drive action to close the gaps. Scorecards highlight where each service falls short of your engineering standards. Teams can then use tools like the DX CLI to point AI agents at failing checks, so they can understand what’s wrong and automatically cut PRs to bring services back into compliance.
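
To make the catalog and scorecard ideas above concrete, here is a minimal Python sketch of the general pattern: a catalog of services, a scorecard of checks, and a report of which checks each service fails. This is not Fabric's API or data model. The `Service` fields, the check names, and the 80% coverage threshold are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Service:
    """Hypothetical catalog entry; field names are illustrative only."""
    name: str
    owner: Optional[str] = None
    has_docs: bool = False
    test_coverage: float = 0.0  # fraction between 0.0 and 1.0
    has_ci: bool = False
    depends_on: List[str] = field(default_factory=list)


@dataclass
class Check:
    """One engineering standard, expressed as a pass/fail predicate."""
    name: str
    passes: Callable[[Service], bool]


# Hypothetical scorecard; the checks and thresholds are assumptions.
SCORECARD = [
    Check("has-owner", lambda s: s.owner is not None),
    Check("has-docs", lambda s: s.has_docs),
    Check("coverage-80", lambda s: s.test_coverage >= 0.8),
    Check("has-ci", lambda s: s.has_ci),
]


def failing_checks(service: Service, scorecard: List[Check]) -> List[str]:
    """Return the names of the checks this service does not pass."""
    return [check.name for check in scorecard if not check.passes(service)]


def report(catalog: List[Service], scorecard: List[Check]) -> Dict[str, List[str]]:
    """Map each service name to its list of failing checks."""
    return {s.name: failing_checks(s, scorecard) for s in catalog}


# Made-up example services, not real data.
catalog = [
    Service("payments", owner="team-billing", has_docs=True,
            test_coverage=0.91, has_ci=True),
    Service("legacy-reports", test_coverage=0.35, depends_on=["payments"]),
]

if __name__ == "__main__":
    for name, gaps in report(catalog, SCORECARD).items():
        print(name, "passes all checks" if not gaps else f"fails: {gaps}")
```

In a setup like this, the per-service list of failing checks is the kind of structured gap an AI agent could be pointed at, along with the ownership and dependency context from the catalog entry.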

Our research shows the ceiling on AI productivity gains is less about the tools and more about the engineering foundations that the tools depend on. The organizations that capture the next wave of AI-driven productivity will be the ones that invest in those foundations systematically, not the ones that buy another coding assistant and hope for the best.

To learn more about Fabric, request a demo or watch our live guided walkthrough of Fabric.